CRITICAL CHALLENGES IN THE INTERNET OF THINGS

IoT devices are widely used, and their number is still growing very fast because of their importance in “smart everything” applications: the smart grid, smart homes, smart vehicles and smart cities, smart manufacturing in industry (known as Industrie 4.0), and smart supply chains in business and commerce.

They benefit from the rapid increase in the bandwidth, processing and storage capacity of the Internet, and also from the higher wireless bandwidth and lower latency that result from the transition to 5G. However, this massive deployment of IoT devices has not come without significant performance issues.

Indeed, groups of IoT devices, installed mainly as sensors but sometimes also as actuators, often “speak with” specific IoT Gateways that link them to the Edge or Cloud services which support them. Many IoT devices act together in a time-synchronised manner to deliver data or receive instructions for specific actuations, and since the data they convey or receive is time-critical, it loses its value if some deadline is exceeded. Thus, if IoT Gateways become congested, this can lead to the well-known IoT Massive Access Problem (MAP) [1], where critical data is lost through buffer overflows or processor saturation, and excessively delayed data loses its value because it arrives too late to be of real use.

In addition, the proliferation of IoT devices and the major capacity increases in the Internet have unfortunately also facilitated a wide variety of malicious operations and attacks. Indeed, various industry reports indicate that the number of IoT attacks in 2021 exceeded 1 billion. Such attacks include phishing, aimed at penetrating IoT networks and gathering protected information, as well as Denial of Service (DoS), Distributed Denial of Service (DDoS), botnet attacks, brute-force penetration attacks and others.

The IoTAC Project has addressed these two critical issues by inventing novel algorithms and techniques, and testing them under realistic conditions.

SOLVING THE MASSIVE ACCESS PROBLEM (MAP)

The MAP can frequently occur even in the absence of malicious attacks, and the IoTAC Project partner IITIS has studied this issue. IITIS has proposed a solution in the form of a novel method for shaping the traffic transmitted by IoT devices, called the Quasi-Deterministic Transmission Policy (QDTP) [2].

QDTP offers simple rules for data transmission from the IoT devices, by imposing a lower bound on the time that must elapse between successive transmissions. This approach is shown to dramatically reduce congestion at the IoT Gateways, at the cost of a very small additional waiting time for data at the IoT devices.
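As a rough illustration of the spacing rule described above, the Python sketch below assumes that a device generates packets at times a_1 ≤ a_2 ≤ … and sends the n-th packet at t_n = max(a_n, t_{n-1} + D), where D is the minimum spacing between successive transmissions. This form of the rule, the names `qdtp_schedule` and `mean_extra_wait`, and the value of D are assumptions made for illustration; the exact QDTP rule and the choice of its parameters are given in [2].

```python
from typing import List


def qdtp_schedule(arrivals: List[float], D: float) -> List[float]:
    """Transmission instants for one device under an assumed QDTP-style rule:
    t_n = max(a_n, t_{n-1} + D), so consecutive sends are at least D apart
    and no packet is sent before it has been generated."""
    if D < 0:
        raise ValueError("D must be non-negative")
    sends: List[float] = []
    last = float("-inf")  # no transmission has happened yet
    for a in sorted(arrivals):
        t = max(a, last + D)
        sends.append(t)
        last = t
    return sends


def mean_extra_wait(arrivals: List[float], sends: List[float]) -> float:
    """Average additional delay at the device caused by the shaping."""
    return sum(t - a for a, t in zip(sorted(arrivals), sends)) / len(arrivals)


# Four packets generated almost at once, then an isolated packet one second later
arrivals = [0.0, 0.001, 0.002, 0.003, 1.0]
sends = qdtp_schedule(arrivals, D=0.05)
print(sends, mean_extra_wait(arrivals, sends))
```

In this toy case the near-simultaneous burst is sent 50 ms apart (at about 0, 0.05, 0.10 and 0.15 s), adding roughly 59 ms of waiting on average over the five packets, while the isolated packet at 1.0 s is sent immediately. Applied across many devices, spacing of this kind smooths the aggregate arrival stream seen by the gateway.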

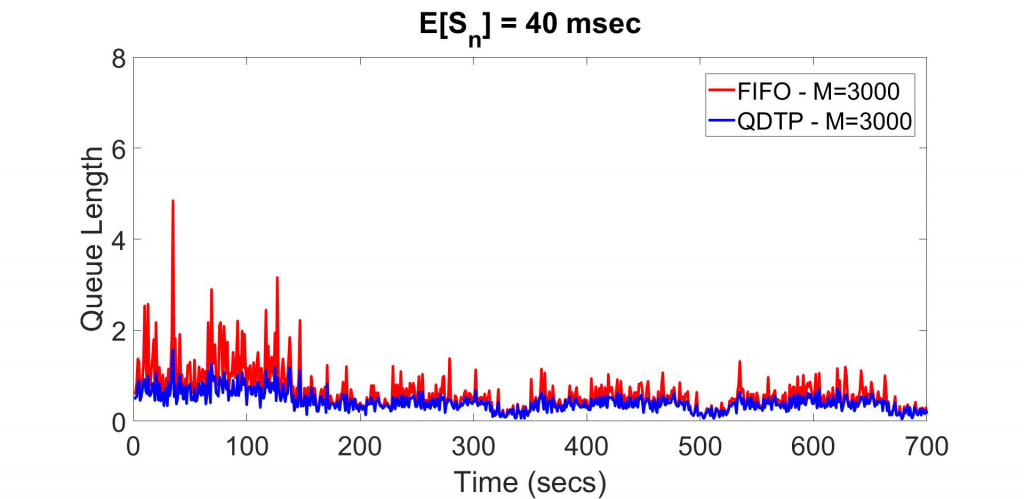

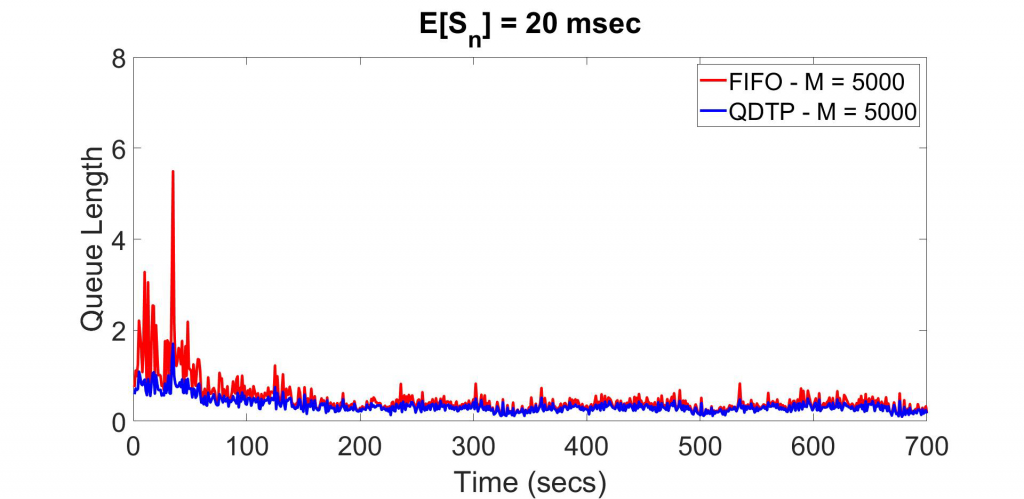

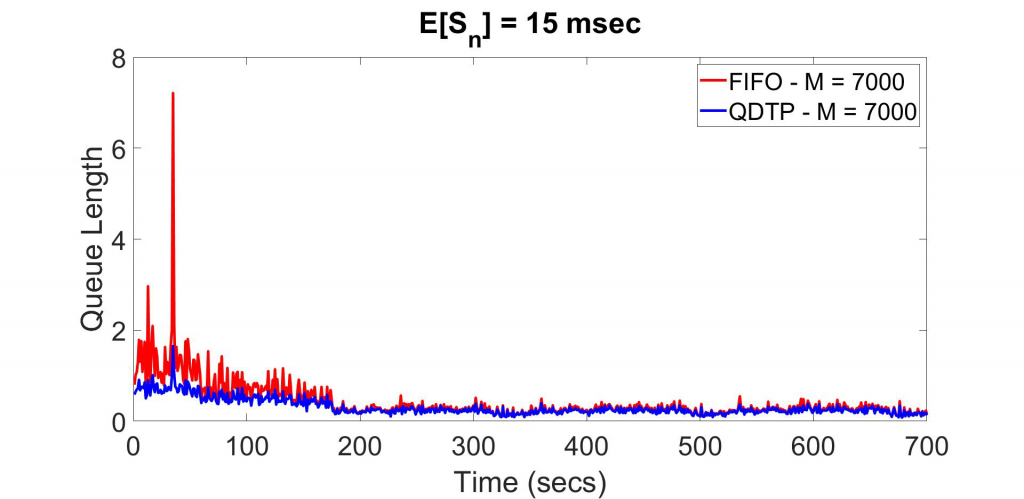

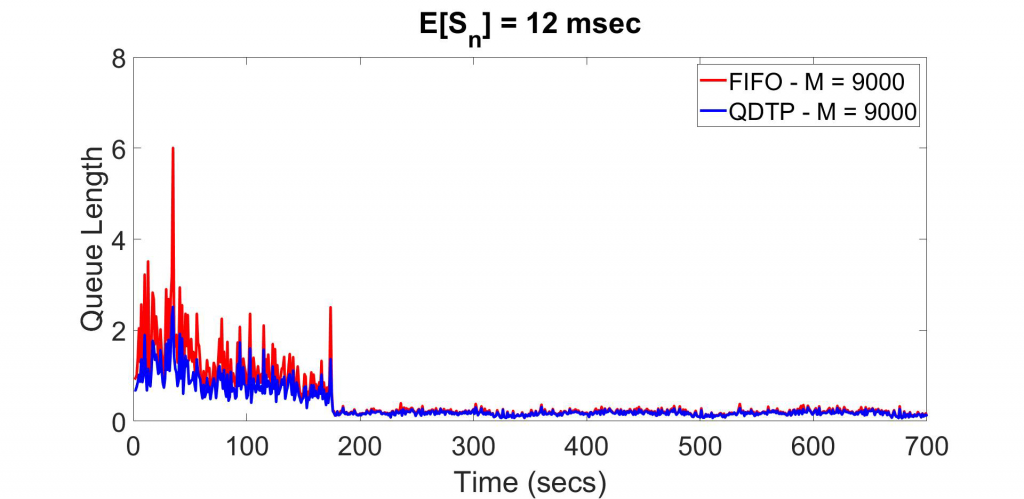

Experimental results obtained with QDTP are shown in Figure 1, where the average queue length at the IoT Gateway is measured over each successive one-second time slot, both when the IoT devices use the common First In First Out (FIFO) transmission policy (red) and when they use the novel QDTP transmission policy (blue).

The curves cover a total duration of 700 seconds. They are obtained from measurements of real arrival instants in the dataset of [3], where for each value of M, the number of IoT devices, the average service time E[S] at the gateway is set to a value that ensures that overload does not occur, namely E[S] = 40 ms for M = 3000, E[S] = 20 ms for M = 5000, E[S] = 15 ms for M = 7000 and E[S] = 12 ms for M = 9000.
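To see what these settings imply, suppose (an assumption made only for this illustration, since the text gives no per-device rates) that each of the M devices generates on average λ packets per second and that the gateway behaves as a single server. The gateway is then not overloaded as long as its utilisation ρ = M·λ·E[S] stays below 1, i.e. λ < 1/(M·E[S]). The short computation below evaluates this bound for the four settings above; `max_device_rate` is a hypothetical helper name.

```python
# (M, E[S] in seconds) pairs taken from the text above
settings = [(3000, 0.040), (5000, 0.020), (7000, 0.015), (9000, 0.012)]


def max_device_rate(m: int, mean_service: float) -> float:
    """Largest mean per-device rate (packets/s) keeping rho = m * lam * E[S] below 1."""
    return 1.0 / (m * mean_service)


for m, es in settings:
    lam = max_device_rate(m, es)
    print(f"M={m}: M*E[S] = {m * es:.0f} s, so lambda < {lam:.5f} packets/s "
          f"(on average less than one packet every {1 / lam:.0f} s per device)")
```

In all four cases M·E[S] lies between 100 and 120 seconds, so under this assumption each device must send, on average, less than roughly one packet every 100 to 120 seconds for the gateway to remain below saturation.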

These results demonstrate the significant reduction in IoT Gateway congestion obtained with the novel QDTP approach developed in IoTAC, compared with the commonly used FIFO policy.

Fig. 1. Experimental evaluation of the effectiveness of QDTP Traffic Shaping to Eliminate the Massive Access Problem.
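The per-slot average queue length used in Figure 1 can be computed from packet arrival and departure instants. The Python sketch below assumes, for illustration only, that the gateway is a single server that processes packets in FIFO order with known service times; `fifo_departures` and `slot_average_queue` are hypothetical helper names, and the toy traffic is synthetic rather than the dataset of [3]. It contrasts the same 50 packets per second arriving either as one synchronised burst or evenly spread over each second.

```python
from typing import List, Sequence


def fifo_departures(arrivals: Sequence[float], services: Sequence[float]) -> List[float]:
    """Departure instants of a single-server FIFO queue (arrivals must be sorted)."""
    departures: List[float] = []
    free_at = 0.0  # time at which the server next becomes idle
    for a, s in zip(arrivals, services):
        start = max(a, free_at)  # wait while earlier packets are being served
        free_at = start + s
        departures.append(free_at)
    return departures


def slot_average_queue(arrivals: Sequence[float], departures: Sequence[float],
                       n_slots: int, slot: float = 1.0) -> List[float]:
    """Time-average number of packets present in each slot [k*slot, (k+1)*slot)."""
    edges = [(k + 1) * slot for k in range(n_slots)]
    events = sorted([(a, +1) for a in arrivals] + [(d, -1) for d in departures])
    area = [0.0] * n_slots  # integral of the queue length over each slot
    n, t_prev, k = 0, 0.0, 0
    for t, delta in events:
        while k < n_slots and t >= edges[k]:  # close every slot that ends by time t
            area[k] += n * (edges[k] - t_prev)
            t_prev, k = edges[k], k + 1
        if k < n_slots:
            area[k] += n * (t - t_prev)
            t_prev = t
        n += delta
    while k < n_slots:  # queue length is constant after the last event
        area[k] += n * (edges[k] - t_prev)
        t_prev, k = edges[k], k + 1
    return [x / slot for x in area]


# Toy traffic: 50 packets per second, 10 ms of service each, over 3 one-second slots
burst = [float(s) for s in range(3) for _ in range(50)]     # all sent together
spread = [s + i / 50 for s in range(3) for i in range(50)]  # evenly spaced
for name, arr in (("synchronised burst", burst), ("evenly spaced", spread)):
    dep = fifo_departures(arr, [0.010] * len(arr))
    print(name, [round(q, 2) for q in slot_average_queue(arr, dep, n_slots=3)])
```

With identical offered load, the synchronised burst keeps on average 12.75 packets at the gateway in each slot, whereas the evenly spaced stream keeps 0.5, which is the kind of gap that spacing out transmissions aims to close.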

IoT devices have been particularly vulnerable to attacks because of their simplicity and ease of connectivity. Since they are designed for ease of installation, their factory-set parameters make them easier to attack and compromise. Similarly, since many of them contain very simple processing elements, sophisticated authentication and protection mechanisms cannot easily be installed in most IoT devices.

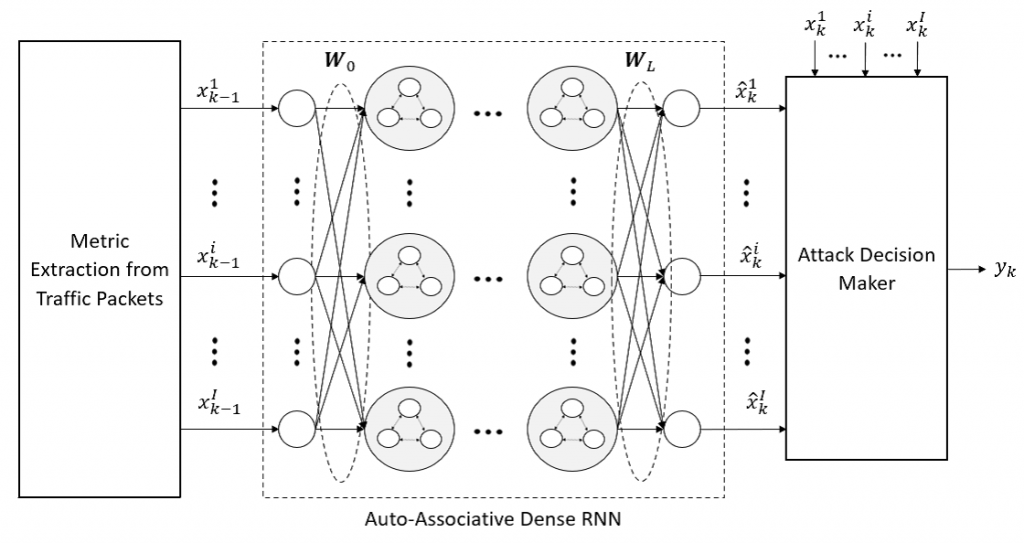

For this reason, IITIS, a partner of the IoTAC Project, also devotes much effort to developing Attack Detection (AD) schemes for the IoT that address various forms of attack, especially the most common or the most harmful ones. To this end, we use the Auto-Associative Dense Random Neural Network (AADRNN) [4], [5] shown in Figure 2. The AADRNN is trained with normal traffic and tested with traffic that includes attacks. Its Auto-Associative structure means that it can be trained with “normal traffic” only, and does not need to learn all the variants of attack traffic that it may encounter.

Experimental results [6] have shown that it detects attacks with high accuracy and few false alarms. Compared on the same data sets with other common Machine Learning methods (Lasso and KNN), it was shown to have higher accuracy in general, much lower computation times than KNN, and slightly higher (but comparable) computation times than Lasso.

Fig. 2. Architecture of the Dense RNN based attack detector with its three modules: Metric Extraction from Traffic Packets, AA-Dense RNN and Attack Decision Maker.
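The sketch below mirrors the three modules of Figure 2 under clearly stated assumptions; it is not the AADRNN of [4], [5]. As a stand-in for the auto-associative network it uses a one-component linear auto-associative model (principal component analysis computed by power iteration), which shares the property described above: it is trained on normal traffic only and flags inputs that it cannot reconstruct well. The metrics (packet rate and mean packet size per window), the decision rule (1.5 times the largest reconstruction error seen on normal data), all names, and the synthetic traffic are hypothetical and do not come from [6] or from the Kitsune data.

```python
import math
import random
from typing import List, Sequence

Vec = List[float]


def extract_metrics(sizes: Sequence[float], window: float = 1.0) -> Vec:
    """Hypothetical Metric Extraction: packet rate and mean packet size in one window."""
    mean_size = sum(sizes) / len(sizes) if sizes else 0.0
    return [len(sizes) / window, mean_size]


class AutoAssociativeDetector:
    """Stand-in for the AA-Dense RNN: a one-component linear auto-associative model
    (PCA). Trained on normal traffic only; inputs it reconstructs poorly are flagged."""

    def __init__(self, margin: float = 1.5, iters: int = 200) -> None:
        self.margin = margin  # hypothetical safety factor on the threshold
        self.iters = iters    # power-iteration steps

    def _standardise(self, x: Sequence[float]) -> Vec:
        return [(xi - m) / s for xi, m, s in zip(x, self.mu, self.sd)]

    def fit(self, normal: List[Vec]) -> "AutoAssociativeDetector":
        n, d = len(normal), len(normal[0])
        self.mu = [sum(row[j] for row in normal) / n for j in range(d)]
        self.sd = [math.sqrt(sum((row[j] - self.mu[j]) ** 2 for row in normal) / n) or 1.0
                   for j in range(d)]
        z = [self._standardise(row) for row in normal]
        cov = [[sum(r[i] * r[j] for r in z) / n for j in range(d)] for i in range(d)]
        v = [float(i + 1) for i in range(d)]  # arbitrary non-zero start vector
        for _ in range(self.iters):  # power iteration -> leading principal direction
            w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(wi * wi for wi in w)) or 1.0
            v = [wi / norm for wi in w]
        self.v = v
        # Attack Decision Maker (assumed rule): margin times the largest normal error
        self.threshold = self.margin * max(self.error(row) for row in normal)
        return self

    def error(self, x: Sequence[float]) -> float:
        """Squared reconstruction error in standardised units."""
        z = self._standardise(x)
        p = sum(zi * vi for zi, vi in zip(z, self.v))  # 1-D code of the input
        return sum((zi - p * vi) ** 2 for zi, vi in zip(z, self.v))

    def is_attack(self, x: Sequence[float]) -> bool:
        return self.error(x) > self.threshold


# Synthetic normal traffic: busier windows carry larger packets (toy assumption)
rng = random.Random(0)
normal_windows = []
for _ in range(200):
    n_pkts = rng.randint(10, 20)
    normal_windows.append(extract_metrics([rng.gauss(40 * n_pkts, 20) for _ in range(n_pkts)]))

det = AutoAssociativeDetector().fit(normal_windows)
typical = extract_metrics([600.0] * 15)  # follows the normal rate/size trend
flood = extract_metrics([60.0] * 300)    # many small packets, flood-like
print(det.is_attack(typical), det.is_attack(flood))
```

The flood-like window lies far from the rate/size relation learned from normal traffic, so its reconstruction error is far above the threshold, while the typical window is reconstructed almost exactly. No attack example was needed for training, which is the point of the auto-associative design; a real detector would use the trained AADRNN and the metrics and decision rule described in [6].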

Experimental Results

To evaluate the performance of our attack detection method, we use the Mirai botnet attack data from the publicly available Kitsune data set [7], which contains 764,137 packet transmissions including both normal and attack traffic. We use only 70% of the normal traffic packets for training, and all of the packets (both normal and attack traffic) to test the attack detector.
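The training/test split described above takes only a few lines. The sketch assumes labels 0 for normal and 1 for attack packets and takes the first 70% of the normal packets in arrival order; the text does not say whether the 70% was chosen chronologically or at random, and `split_for_attack_detection` is a hypothetical name.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_for_attack_detection(packets: Sequence[T], labels: Sequence[int],
                               train_frac: float = 0.7) -> Tuple[List[T], List[T]]:
    """Training set: the first `train_frac` of the normal packets (label 0) only.
    Test set: every packet, normal and attack alike."""
    normal = [p for p, y in zip(packets, labels) if y == 0]
    n_train = round(train_frac * len(normal))
    return normal[:n_train], list(packets)


# Toy example: 10 normal packets followed by 5 attack packets
pkts = [f"pkt{i}" for i in range(15)]
labels = [0] * 10 + [1] * 5
train, test = split_for_attack_detection(pkts, labels)
print(len(train), len(test))
```

As described in the text, the test set also contains the normal packets used for training, alongside the remaining normal packets and all attack packets.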

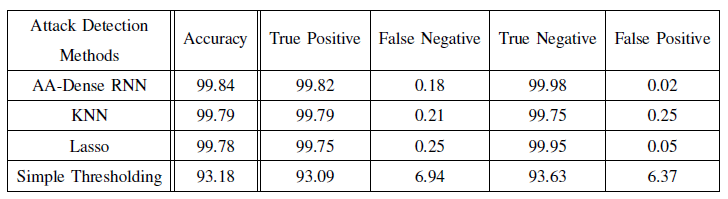

Table I compares the detection methods in terms of accuracy and of the percentages of true positives, false negatives, true negatives and false positives. The AADRNN attack detector significantly outperforms the other methods in accuracy, and achieves 99.82% true positives and 99.98% true negatives, higher than the other methods.
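For reference, the quantities reported in Table I can be computed from confusion-matrix counts. The sketch assumes that attack packets are the positive class, that the true positive and false negative percentages are taken over attack packets, and that the true negative and false positive percentages are taken over normal packets; this is the usual convention but is not spelled out in the text. The function name and the counts are hypothetical.

```python
from typing import Dict


def detection_rates(tp: int, fn: int, tn: int, fp: int) -> Dict[str, float]:
    """Accuracy and per-class rates (in %) from confusion-matrix counts.
    Positives are attack packets; negatives are normal packets."""
    pos, neg = tp + fn, tn + fp
    return {
        "accuracy": 100.0 * (tp + tn) / (pos + neg),
        "TP": 100.0 * tp / pos,  # attacks correctly detected
        "FN": 100.0 * fn / pos,  # attacks missed
        "TN": 100.0 * tn / neg,  # normal packets correctly accepted
        "FP": 100.0 * fp / neg,  # false alarms
    }


# Hypothetical counts for illustration only (not the Table I data)
print(detection_rates(tp=990, fn=10, tn=1995, fp=5))
```

Under this convention TP + FN and TN + FP each sum to 100%, so a 99.82% true positive rate corresponds to 0.18% of attack packets being missed.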

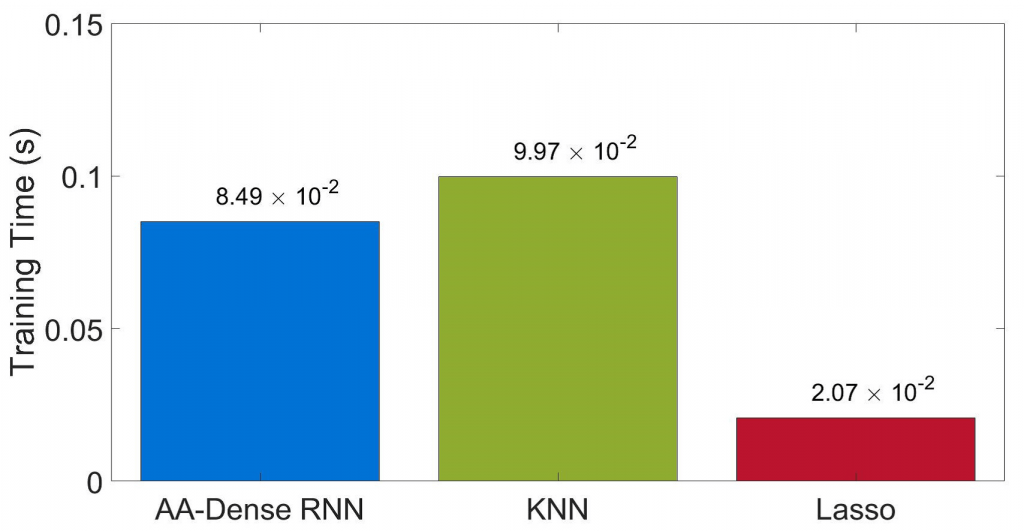

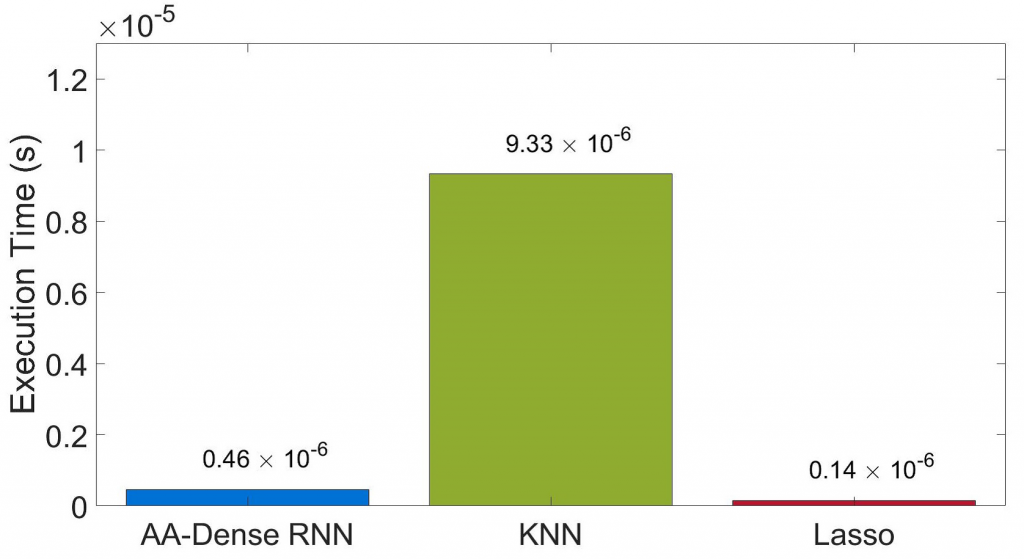

Comparing the AADRNN with KNN and Lasso with respect to training and execution times, measured on a workstation with 32 GB of RAM and a 3.7 GHz AMD Ryzen 7 3700X processor, Figure 3 shows that the AADRNN lies between these two methods.

TABLE I

COMPARISON OF ATTACK DETECTION METHODS WITH RESPECT TO ACCURACY AS WELL AS EACH OF THE TRUE POSITIVE, FALSE NEGATIVE, TRUE NEGATIVE AND FALSE POSITIVE PERCENTAGES

Fig. 3. Training times (Left) and Execution times (Right) of the different attack detection methods.

Future work will evaluate the performance of our proposed attack detector on other publicly available data sets, and integrate this highly accurate Attack Detection tool into the IoTAC use cases.

REFERENCES

[1] E. Gelenbe, M. Nakip, D. Marek, and T. Czachorski, “Diffusion analysis improves scalability of IoT networks to mitigate the massive access problem,” in IEEE MASCOTS 2021: 29th International Symposium on Modelling, Analysis and Simulation of Computer and Telecommunication Systems, 2021, pp. 1–6. [Online]. Available: https://zenodo.org/record/5501822#.YT3bri8itmA

[2] E. Gelenbe and K. Sigman, “IoT traffic shaping and the massive access problem,” in ICC 2022, IEEE International Conference on Communications, 16–20 May 2022, Seoul, South Korea, 2022, pp. 1–6. [Online]. Available: https://zenodo.org/record/5918301

[3] TÜBITAK1001-118E277, “IoT Traffic Generation Pattern Dataset,” Kaggle, November 2021. [Online]. Available: https://www.kaggle.com/tubitak1001118e277/iot-traffic-generation-patterns

[4] E. Gelenbe and Y. Yin, “Deep learning with random neural networks,” in 2016 International Joint Conference on Neural Networks (IJCNN), 2016, pp. 1633–1638.

[5] E. Gelenbe and Y. Yin, “Deep learning with dense random neural networks,” in International Conference on Man–Machine Interactions. Springer, 2017, pp. 3–18.

[6] M. Nakip and E. Gelenbe, “Mirai botnet attack detection with auto-associative dense random neural networks,” in 2021 IEEE Global Communications Conference. IEEE Communications Society, 2021, pp. 1–6. [Online]. Available: https://www.iitis.pl/sites/default/files

[7] Y. Mirsky, T. Doitshman, Y. Elovici, and A. Shabtai, “Kitsune: An ensemble of autoencoders for online network intrusion detection,” in The Network and Distributed System Security Symposium (NDSS), 2018.