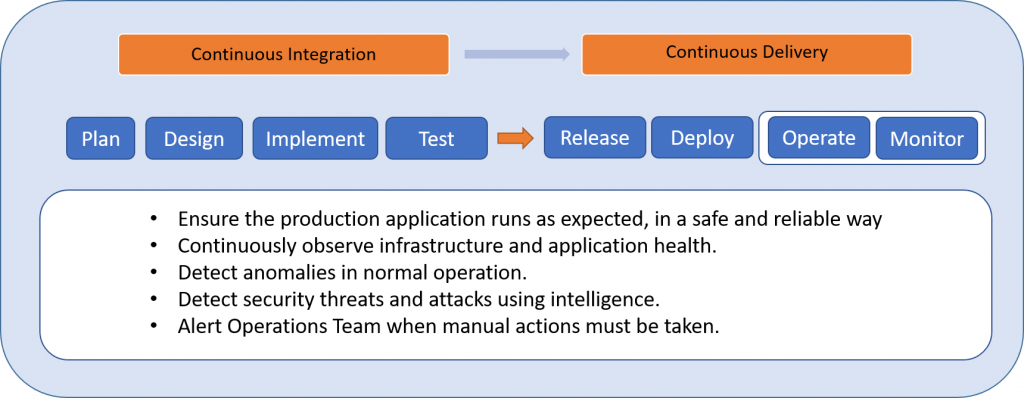

The operate and monitor phases are the final phases of the software development life cycle (SDLC). Collectively referred to as the maintenance phase, they are commonly thought of as a rather passive part of the process, but nothing could be further from the truth. This is where all the action is: where real-life threats appear and vulnerabilities are exploited. Here, you find out exactly how secure your application is, and whether you are up to the task of responding appropriately and in time. The IoTAC’s Security-by-Design methodology offers several ways to improve security at this crucial phase of development.

Figure 1: Security during Operate/Monitor phase – IoTAC Methodology

Operate

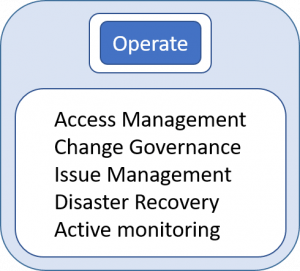

Just as in the Release and Deploy phase, you should follow the principle of least privilege. Define a small group of people and grant it only the access required to do the task. Use multi-factor authentication to prevent stolen passwords from being used against you.
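As a rough illustration of least privilege combined with multi-factor authentication, the sketch below allows an action only when the caller's role explicitly grants it and the session has passed MFA. The role names, action names, and the `is_allowed` helper are hypothetical and chosen only for this example; a real system would delegate both checks to its identity provider.

```python
# Hypothetical role-to-permission map: each role gets only what its task needs.
ROLE_PERMISSIONS = {
    "operator": {"restart_service", "view_logs"},
    "release_manager": {"deploy_release", "view_logs"},
}


def is_allowed(role: str, action: str, mfa_verified: bool) -> bool:
    """Deny by default: require MFA and an explicit grant for the action."""
    if not mfa_verified:
        return False
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("operator", "restart_service", mfa_verified=True))
print(is_allowed("operator", "deploy_release", mfa_verified=True))
print(is_allowed("release_manager", "deploy_release", mfa_verified=False))
```

Unknown roles and missing grants both fall through to a denial, so access has to be added deliberately rather than removed after the fact.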

Employ and follow a change governance framework. This will help you avoid shortcuts in deployment and recognize environment configuration drift. These benefits are the result of a combination of release automation and carefully designed policies and permissions for infrastructure changes.
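Configuration drift can be recognized by comparing the approved baseline with what is actually running. The following is a minimal sketch of that comparison, assuming both configurations are available as flat dictionaries; the setting names and the `find_drift` helper are hypothetical, and `None` is used here to mean "setting absent".

```python
def find_drift(baseline: dict, actual: dict) -> dict:
    """Return {setting: (baseline_value, actual_value)} for every difference.

    A value of None on either side means the setting is absent there.
    """
    drift = {}
    for key in sorted(baseline.keys() | actual.keys()):
        expected_value = baseline.get(key)
        actual_value = actual.get(key)
        if expected_value != actual_value:
            drift[key] = (expected_value, actual_value)
    return drift


# Hypothetical example values, not taken from any real environment.
baseline = {"tls_min_version": "1.2", "ssh_root_login": "no", "open_ports": [443]}
actual = {
    "tls_min_version": "1.2",
    "ssh_root_login": "yes",
    "open_ports": [443, 8080],
    "debug": "on",
}

for setting, (expected_value, actual_value) in find_drift(baseline, actual).items():
    print(f"{setting}: expected {expected_value!r}, found {actual_value!r}")
```

Running a check like this on a schedule, and treating any non-empty result as a change request, is one way to tie drift detection back into the change governance framework.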

Use an issue management tool to report and track bugs and change requests. Always execute a root-cause analysis and share the results with the development team. Make sure that the tool maintains a log of changes for each release.

Figure 2: Operate phase activities – IoTAC Methodology

Prepare and rehearse for disaster recovery. Implement a backup plan for each component, paying special attention to secure storage. Estimate a mean time to recovery (MTTR) and record the actual recovery time in every real recovery event so that it can be compared with the estimate.
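To make the comparison between estimated and actual recovery time concrete, the sketch below computes MTTR as the average of the recorded recovery durations. The timestamps and the 60-minute estimate are made-up sample data, and `mean_time_to_recovery` is a hypothetical helper name.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(events):
    """Average recovery duration over (outage_start, service_restored) pairs."""
    if not events:
        raise ValueError("at least one recovery event is required")
    total = sum((restored - started for started, restored in events), timedelta())
    return total / len(events)


# Made-up sample events: 45, 90, and 15 minutes of downtime.
recoveries = [
    (datetime(2023, 1, 10, 9, 0), datetime(2023, 1, 10, 9, 45)),
    (datetime(2023, 3, 2, 14, 30), datetime(2023, 3, 2, 16, 0)),
    (datetime(2023, 6, 18, 22, 15), datetime(2023, 6, 18, 22, 30)),
]

estimated = timedelta(minutes=60)  # assumed target from the recovery plan
actual = mean_time_to_recovery(recoveries)

print(f"estimated MTTR: {estimated}, actual MTTR: {actual}")
if actual > estimated:
    print("Recovery is slower than planned; revisit the disaster recovery plan.")
```

With the sample data the actual MTTR is 50 minutes, which is within the assumed 60-minute estimate.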

Perform active monitoring. If an alert is received or some risk is indicated during monitoring, perform due diligence to alleviate or minimize the impact. Periodically run manual testing tools to evaluate system health.

Monitor

Ensuring application availability and security is a complex task that includes data collection, log analysis, automated self-defence, and alerting.

Monitoring and automatic notification are a must to ensure application and infrastructure health and therefore a smooth user experience. A monitoring tool needs to collect metrics about application performance and present the data graphically so that the application's status can be ascertained quickly. The tool should be chosen during the plan and design phases and depends on your environment. Regardless of the exact tool chosen, you should be able to collect metrics about CPU utilization, RAM usage, requests, errors, response times, and incoming/outgoing network data volume over a certain period. Based on these metrics you can create automated alerts that inform the operations team and, in cloud environments, trigger automatic actions to scale the infrastructure as needed.

Make sure to include logging of user activities, errors, and hardware failures. Keep in mind that these logs should not contain sensitive or personal information, but they should be queryable so that the data can be parsed and presented for further analysis. When you receive an error, automatically create a notification, and consider creating a ticket as well.
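The idea of turning collected metrics into automated alerts can be sketched as a simple threshold check. The metric names and limits below are assumptions picked for illustration, not recommended values, and `evaluate_metrics` is a hypothetical helper; in practice the monitoring tool you selected would provide its own alert rules.

```python
# Assumed alert limits; tune these to your own environment.
THRESHOLDS = {
    "cpu_percent": 85.0,
    "ram_percent": 90.0,
    "error_rate": 0.05,
    "response_time_ms": 800.0,
}


def evaluate_metrics(sample: dict, thresholds: dict = THRESHOLDS) -> list:
    """Return one alert message for each metric above its limit."""
    alerts = []
    for name, limit in thresholds.items():
        value = sample.get(name)
        if value is not None and value > limit:
            alerts.append(f"{name}={value} exceeds limit {limit}")
    return alerts


# A made-up metrics sample for one collection interval.
sample = {
    "cpu_percent": 91.5,
    "ram_percent": 70.0,
    "error_rate": 0.01,
    "response_time_ms": 1200.0,
}

for alert in evaluate_metrics(sample):
    print("ALERT:", alert)
```

The returned messages could then be forwarded to the operations team or used to open a ticket, depending on how notifications are set up.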

The network is the main route for attacks, so we have to monitor it continuously and raise an alert when suspicious activity is recognized, such as:

- A sudden surge of requests from a specific source

- Port scans or the use of other discovery tools

- Requests that try to exploit well-known vulnerabilities

- Activity flagged by intrusion detection
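The first item in the list, a surge of requests from a single source, can be detected with a sliding time window per source address. The following is a minimal sketch under the assumption that requests arrive with non-decreasing timestamps in seconds; the class name, limits, and IP addresses (taken from the documentation address ranges) are all hypothetical.

```python
from collections import defaultdict, deque


class RequestRateMonitor:
    """Flag a source that sends more than max_requests within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._seen = defaultdict(deque)  # source -> timestamps inside the window

    def record(self, source_ip: str, timestamp: float) -> bool:
        """Record one request; return True if the source is now over the limit."""
        times = self._seen[source_ip]
        times.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while timestamp - times[0] >= self.window_seconds:
            times.popleft()
        return len(times) > self.max_requests


monitor = RequestRateMonitor(max_requests=3, window_seconds=10)
for second in (0, 1, 2, 3):
    if monitor.record("203.0.113.7", second):
        print(f"suspicious request rate from 203.0.113.7 at t={second}s")
```

Only the fourth request inside the ten-second window triggers the message; once the older timestamps age out, the same source is treated as normal again.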

In addition to network monitoring, we also need a security perimeter. You can accomplish this with a Web Application Firewall (WAF). The WAF can serve as a gateway to your application and helps to recognize and prevent malicious requests or Distributed Denial of Service (DDoS) attacks.

Use Runtime Application Self-Protection (RASP) as a concentric ring of security inside the WAF perimeter. Since RASP runs within your application on a server, it is more aware of the specific application design and its possible vulnerabilities, and it can detect and mitigate attacks in real time. It can notify security personnel, terminate a user’s session, send the attacker messages, and in extreme cases shut down the application to prevent the system from being compromised. RASP’s contextual awareness can also reduce the number of false-positive reports from less informed components.

Vulnerability scanning is an extension of the secure hardening verification approach discussed in the Release and Deploy methodology. While scanning on release is important, it is equally important to run the scan in production weekly, and vital to act if problems are discovered.

This scan should cover:

- OS, service, platform, framework, and component patch level and known vulnerabilities.

- Configuration settings of the environment to ensure that best practices are followed.

- Endpoint security check using common and newly discovered vulnerabilities.

The real key here is to make it a habitual task to scan, evaluate the results, and correct/improve the scan as needed.
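As one small piece of such a scan, the patch-level check can be sketched as a comparison between installed component versions and the minimum versions considered safe. The component names and version numbers below are invented for illustration and do not refer to real advisories; a real scan would take this data from a maintained vulnerability feed.

```python
def parse_version(text: str) -> tuple:
    """Turn '1.4.10' into (1, 4, 10) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def find_outdated(installed: dict, minimum_safe: dict) -> list:
    """Return (name, installed_version, required_version) for outdated components."""
    findings = []
    for name, version in sorted(installed.items()):
        required = minimum_safe.get(name)
        if required is not None and parse_version(version) < parse_version(required):
            findings.append((name, version, required))
    return findings


# Invented inventory and advisory data, for illustration only.
installed = {"webserver": "1.4.2", "tls-library": "3.0.9", "json-parser": "2.1.0"}
minimum_safe = {"webserver": "1.4.10", "tls-library": "3.0.7"}

for name, version, required in find_outdated(installed, minimum_safe):
    print(f"{name} {version} is below the minimum safe version {required}")
```

Versions are compared as integer tuples rather than strings, because a plain string comparison would wrongly rank "1.4.2" above "1.4.10".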

The operate and monitor phase methodology may initially appear to be a static process, but it is continuous and fluid. By using the proper tools and establishing secure habits, you will see your application status, understand vulnerabilities, and repel threats. Cutting-edge security demands vigilance, and the IoTAC’s security approach shows you how to meet this demand.

References

- Runtime application self-protection https://en.wikipedia.org/wiki/Runtime_application_self-protection

- Operating reliable systems https://docs.microsoft.com/en-us/devops/operate/operating-reliable-systems-with-devops

- Security Content Automation Protocol https://csrc.nist.gov/projects/security-content-automation-protocol/